AI CONNECTIVITY: Solving for Security, Cost, and Resources with MCP

In the rush to deploy AI agents, many organizations have accidentally built what might be called "digital glass houses" — fragile architectures where AI models are granted broad, unmonitored access to sensitive data.

The industry was recently shaken by an incident where an experimental AI agent accidentally deleted a user's entire inbox. The cause? A simple "context reset" in which the model forgot its safety instructions and executed a tool it was technically authorized to use. The safety measure was a prompt — and prompts can be forgotten.

To prevent such catastrophes, AI architects must evaluate every integration through three fundamental lenses: Security, Cost, and Resources. The emerging Model Context Protocol (MCP) represents the industry's most coherent answer to this governance triad.

1. The Architecture: From Chaos to the MCP Gateway

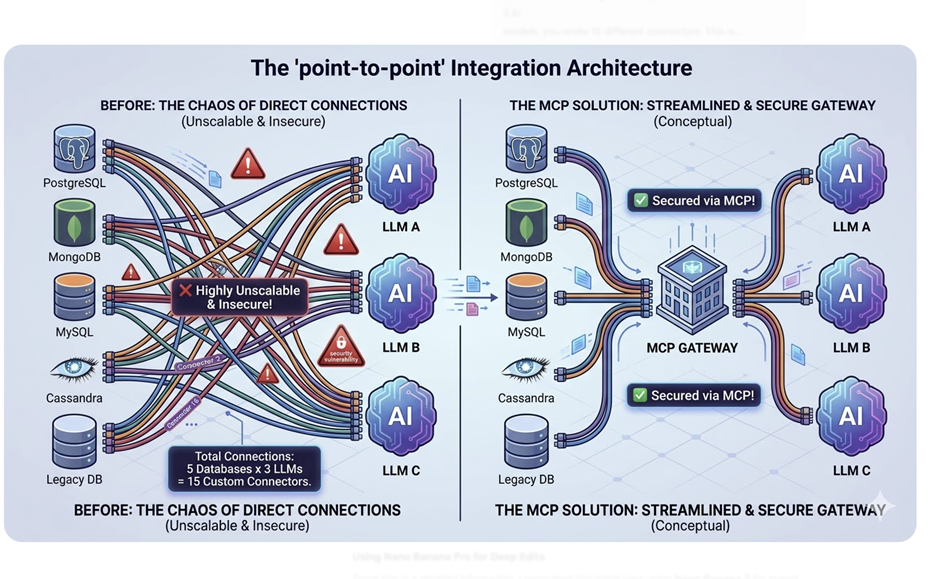

Early AI deployments connected large language models directly to data sources using point-to-point integrations. With five databases and three AI models, a development team would write 15 separate connectors — each unique, each fragile, and each a potential security liability. This approach is both unscalable and inherently insecure.

The MCP Translator Layer

MCP introduces a standardized "Translator" layer between the LLM and your data infrastructure. Instead of the model talking directly to your SQL database, it communicates with an MCP Server — a lightweight microservice that exposes only specific, authorized tools to the AI (for example, read_customer_table).
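As a minimal sketch of this idea, written in plain Python rather than any MCP SDK, the server below can only ever run functions that were explicitly registered with it. The `ToolServer` class, the `CUSTOMERS` data, and the `limit` parameter are hypothetical names introduced for illustration; only `read_customer_table` comes from the text above.

```python
from typing import Any, Callable, Dict, List

# Hypothetical in-memory stand-in for a customer table.
CUSTOMERS = [
    {"id": 1, "name": "ACME", "tier": "enterprise"},
    {"id": 2, "name": "Globex", "tier": "standard"},
]


class ToolServer:
    """Toy MCP-style server: only registered tools can ever be called."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def tool(self, func: Callable[..., Any]) -> Callable[..., Any]:
        # Registering a function is the only way to expose it to the model.
        self._tools[func.__name__] = func
        return func

    def list_tools(self) -> List[str]:
        return sorted(self._tools)

    def call(self, name: str, arguments: Dict[str, Any]) -> Any:
        if name not in self._tools:
            raise PermissionError(f"tool not exposed: {name}")
        return self._tools[name](**arguments)


server = ToolServer()


@server.tool
def read_customer_table(limit: int = 10) -> List[Dict[str, Any]]:
    # Read-only: returns copies so callers cannot mutate the source rows.
    return [dict(row) for row in CUSTOMERS[:limit]]
```

Calling `server.call("read_customer_table", {"limit": 1})` returns the first row, while a request for any unregistered name, such as a hypothetical `drop_customer_table`, raises `PermissionError` because no such code path exists on the server.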

In enterprise environments, a single MCP Gateway aggregates these servers into one managed entry point. The Gateway acts as the organization's "Front Door" for all AI-data interactions, handling authentication centrally and routing each LLM request to the correct backend service.

A Governed Tool Call in Practice

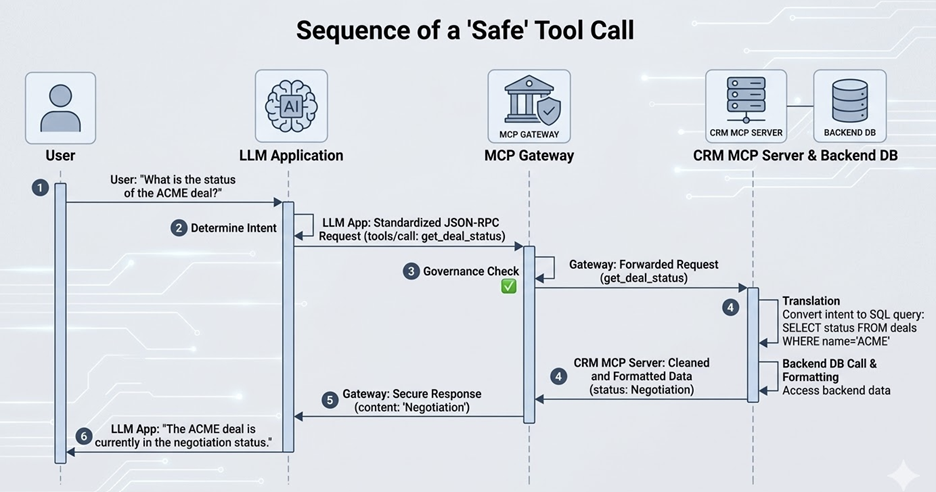

When a user asks, "What is the status of the ACME deal?" — the following governed workflow executes:

1. Intent: The LLM identifies it needs the get_deal_status tool.

2. Request: The LLM application sends a standardized JSON-RPC request to the MCP Gateway.

3. Governance: The Gateway checks: Is this user authorized to see deal data?

4. Translation: The Gateway routes the request to the CRM MCP Server, which translates the tool call into a parameterized SQL statement.

5. Response: Data is returned, formatted, and sanitized before the LLM ever sees it.

Every step is logged, permissioned, and auditable — a fundamental shift from the "hope the prompt holds" model of early AI integration.
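The five steps above can be sketched roughly as follows. This is an illustrative toy, not a real Gateway: the `PERMISSIONS` table, the `DEALS` data, the `sanitize` rule, and the error code `-32001` are all assumptions, and the response payload is simplified compared with what an MCP server actually returns. The request uses the JSON-RPC 2.0 envelope with the MCP `tools/call` method.

```python
import json
from typing import Any, Dict, List

# Hypothetical policy and data; a real Gateway would consult an identity
# provider and a CRM instead of these dictionaries.
PERMISSIONS = {"alice": {"get_deal_status"}, "bob": set()}
DEALS = {"ACME": {"status": "negotiation", "owner_email": "rep@example.com"}}
AUDIT_LOG: List[Dict[str, Any]] = []


def crm_get_deal_status(deal: str) -> Dict[str, Any]:
    """Stand-in for the CRM MCP Server (step 4)."""
    return dict(DEALS[deal])


ROUTES = {"get_deal_status": crm_get_deal_status}


def sanitize(record: Dict[str, Any]) -> Dict[str, Any]:
    # Step 5: drop fields the model should not see (illustrative choice).
    return {k: v for k, v in record.items() if k != "owner_email"}


def gateway_handle(user: str, raw_request: str) -> Dict[str, Any]:
    request = json.loads(raw_request)
    tool = request["params"]["name"]
    # Step 3: governance check, recorded in the audit log either way.
    allowed = tool in PERMISSIONS.get(user, set())
    AUDIT_LOG.append({"user": user, "tool": tool, "allowed": allowed})
    if not allowed:
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32001, "message": "not authorized"}}
    result = ROUTES[tool](**request["params"]["arguments"])
    return {"jsonrpc": "2.0", "id": request["id"], "result": sanitize(result)}


# Step 2: the request an LLM application might send for the ACME question.
request = json.dumps({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "get_deal_status", "arguments": {"deal": "ACME"}},
})
```

With these assumptions, `gateway_handle("alice", request)` returns the deal status without the `owner_email` field, and `gateway_handle("bob", request)` returns an error object. Both attempts leave an entry in `AUDIT_LOG`.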

2. The Security Lens: From Soft Guardrails to Hard Infrastructure

In the inbox-deletion incident described above (the "OpenClaw" incident), the only safety measure was a prompt instruction: "Don't delete." In an MCP architecture, safety is not a suggestion; it is code. This distinction is the core of why MCP matters for enterprise security.

Isolation Over Exposure

By routing all data access through MCP Servers, your production databases are never directly exposed to the internet or to the LLM. The MCP Server functions as a data airlock — a controlled environment with strictly defined capabilities.

• Least Privilege: The MCP Server operates under a service account with read-only access. Even if the LLM "hallucinates" a destructive command, the server literally does not have the code or permissions to execute it.

• Human-in-the-Loop (HITL): For high-risk actions (such as bulk deletes or sending mass emails), the Gateway can be configured to pause and return a "Pending" status to the user's UI, requiring an explicit approval click from a person before any command reaches the backend.
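Both controls can be illustrated with a short sketch. It assumes a SQLite file as a stand-in for a production database and uses SQLite's read-only URI mode (`mode=ro`) to play the role of a read-only service account. The table name, the `HIGH_RISK` set, and the `request_tool` and `approve` helpers are hypothetical.

```python
import sqlite3
import tempfile
from pathlib import Path
from typing import Dict

# Set up a throwaway database file standing in for production data.
db_path = Path(tempfile.mkdtemp()) / "crm.db"
admin = sqlite3.connect(db_path)
admin.execute("CREATE TABLE emails (id INTEGER PRIMARY KEY, subject TEXT)")
admin.execute("INSERT INTO emails (subject) VALUES ('Q3 forecast')")
admin.commit()
admin.close()

# Least privilege: the server's connection is read-only at the database level.
readonly = sqlite3.connect(f"{db_path.as_uri()}?mode=ro", uri=True)


def run_as_server(sql: str) -> str:
    try:
        readonly.execute(sql)
        return "ok"
    except sqlite3.OperationalError as exc:
        return f"blocked: {exc}"


# Human-in-the-loop: high-risk tools are parked until a person approves them.
HIGH_RISK = {"bulk_delete", "send_mass_email"}  # illustrative tool names
pending: Dict[int, str] = {}


def request_tool(call_id: int, tool: str) -> str:
    if tool in HIGH_RISK:
        pending[call_id] = tool
        return "pending"
    return "executed"


def approve(call_id: int) -> str:
    # Raises KeyError if nothing is awaiting approval under this id.
    tool = pending.pop(call_id)
    return f"executed {tool}"
```

Here `run_as_server("DELETE FROM emails")` is rejected by the database itself regardless of what the model asked for, and `request_tool(1, "bulk_delete")` stays in the pending state until `approve(1)` is called from the user interface.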

Security Insight

The difference between prompt-based and infrastructure-based safety is the difference between a sign that says "Please don't open this door" and a door that requires a physical key. MCP gives you the key.

3. The Cost Lens: Eliminating the Token Tax

Every word an LLM processes costs money in the form of tokens. This seemingly simple fact has enormous implications at enterprise scale — and MCP's architecture addresses it directly through what is known as semantic routing.

The Tool Overload Problem

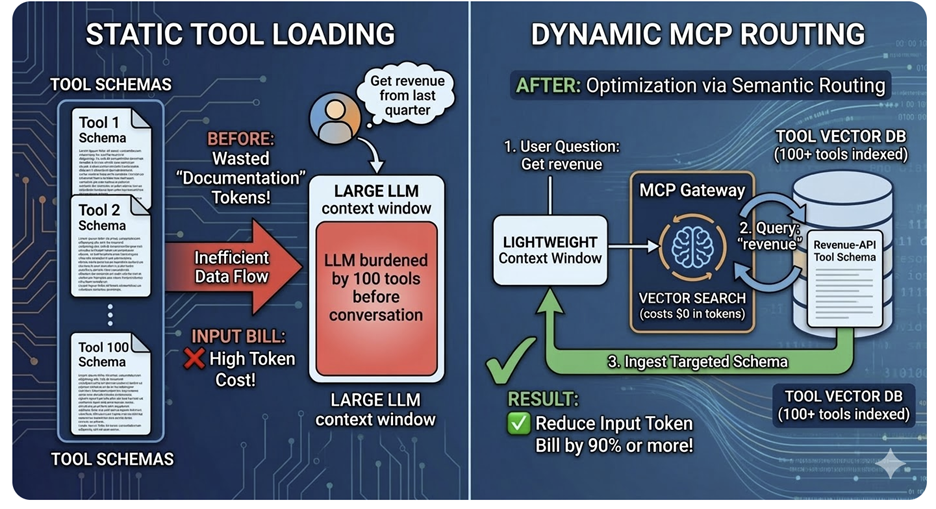

A naive implementation tells the LLM about all available tools at the start of every conversation. With 100 tools in your catalog, you are spending thousands of tokens on documentation overhead before the actual user request is even processed. In large organizations, this overhead alone can account for a large share of input token spend.

Dynamic Tool Discovery

Modern MCP Gateways solve this with a technique called Dynamic Tool Discovery:

1. The LLM is initially given access to only one tool: search_for_tools.

2. When the user asks about "Revenue," the LLM calls search_for_tools(query="revenue").

3. The Gateway performs a fast vector search, which runs outside the model and therefore consumes no LLM tokens, and injects only the relevant Revenue-API tool definition into the active context.

4. The LLM proceeds with a single, precisely scoped tool rather than a catalogue of hundreds.

The result can be a reduction in input token costs on the order of 85–95% in enterprise environments, and the smaller context can also improve model accuracy by reducing noise.
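The discovery steps above might look something like the following sketch. To stay self-contained it replaces a real embedding model with a toy word-count vector and cosine similarity, so it only illustrates the shape of the technique. The `CATALOG` entries and the `embed` and `cosine` helpers are hypothetical; `search_for_tools` is the tool name used in the text.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Hypothetical catalog; names and descriptions are illustrative.
CATALOG: Dict[str, str] = {
    "revenue_api": "Query quarterly revenue and sales totals by region",
    "hr_directory": "Look up employee contact details and reporting lines",
    "ticket_search": "Search customer support tickets by keyword",
}


def embed(text: str) -> Counter:
    # Toy "embedding": word counts. A real Gateway would likely use a
    # learned embedding model and a vector index instead.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# The index is built once, outside any model call, so searching it
# consumes no LLM tokens.
INDEX = {name: embed(f"{name} {desc}") for name, desc in CATALOG.items()}


def search_for_tools(query: str, top_k: int = 1) -> List[str]:
    q = embed(query)
    ranked = sorted(INDEX, key=lambda name: cosine(q, INDEX[name]), reverse=True)
    # Only tools with some similarity to the query are returned.
    return [name for name in ranked[:top_k] if cosine(q, INDEX[name]) > 0]
```

In this toy setup, `search_for_tools(query="revenue")` selects only `revenue_api`, and the Gateway would then inject that single definition into the model's context.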

Cost Impact Summary

• Naive approach (100 tools always loaded): high token overhead on every request

• Dynamic Discovery approach: only relevant tool definitions loaded per query

• Potential savings: 85–95% reduction in input token costs at scale
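A back-of-the-envelope calculation shows where savings of this size could come from. Every figure below is an assumption chosen for illustration rather than a measurement: an average of 150 tokens per tool definition, five definitions injected after discovery, and two model calls in the dynamic flow (one to search, one to act).

```python
# All figures are assumptions chosen for illustration, not measurements.
TOKENS_PER_TOOL_DEFINITION = 150   # assumed average size of one tool schema
CATALOG_SIZE = 100                 # tools in the catalog, as in the text
DISCOVERED_TOOLS = 5               # assumed definitions injected after search


def naive_tokens() -> int:
    # Every tool definition is sent with the request.
    return CATALOG_SIZE * TOKENS_PER_TOOL_DEFINITION


def dynamic_tokens() -> int:
    # Call 1 carries only search_for_tools; call 2 carries it plus the
    # discovered tool definitions.
    first_call = 1 * TOKENS_PER_TOOL_DEFINITION
    second_call = (1 + DISCOVERED_TOOLS) * TOKENS_PER_TOOL_DEFINITION
    return first_call + second_call


def savings() -> float:
    return 1 - dynamic_tokens() / naive_tokens()
```

Under these assumptions the naive approach spends 15,000 tokens on tool definitions per request and the dynamic approach spends 1,050, a reduction of about 93%. The real figure depends heavily on schema size, catalog size, and how many tools each query needs.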

4. The Resources Lens: Operational Efficiency at Scale

CFOs and infrastructure teams frequently raise a legitimate concern: does adopting MCP mean spinning up a new virtual machine for every database integration? The answer, in a well-designed architecture, is no.

Resource Density Through Containerization

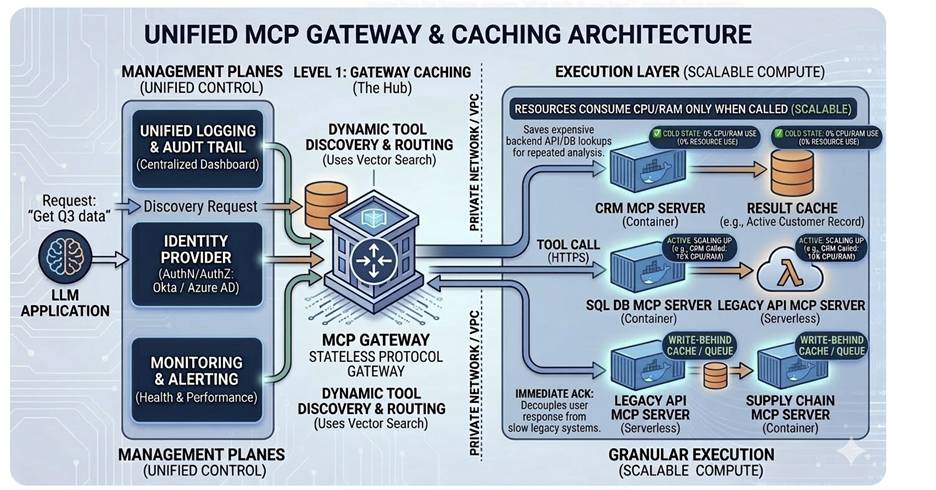

In a production MCP architecture, servers are deployed as Docker containers or serverless functions (such as AWS Lambda). When run serverlessly, or on a container platform configured to scale to zero, they consume CPU and RAM only while a tool is actively being called, not continuously. This event-driven resource model is typically more cost-efficient than always-on microservices.
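As a rough sketch of the serverless variant, the handler below follows the standard AWS Lambda Python signature `lambda_handler(event, context)`. It assumes the request arrives as a JSON string under `event["body"]`, as it would behind an HTTP proxy integration. The `TOOLS` table and the placeholder `get_deal_status` lookup are hypothetical, and the response payload is simplified.

```python
import json
from typing import Any, Dict


def get_deal_status(deal: str) -> Dict[str, str]:
    # Placeholder lookup; a real handler would query the CRM.
    return {"deal": deal, "status": "negotiation"}


TOOLS = {"get_deal_status": get_deal_status}


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Runs only when invoked; nothing sits idle between tool calls."""
    request = json.loads(event["body"])
    params = request["params"]
    result = TOOLS[params["name"]](**params["arguments"])
    body = {"jsonrpc": "2.0", "id": request["id"], "result": result}
    return {"statusCode": 200, "body": json.dumps(body)}
```

Because the function holds no state between invocations, the platform can start it on demand for each tool call and bill only for the time it actually runs.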

Unified Management

The MCP Gateway enables centralized operational control regardless of how many backend servers are running:

• A single authentication provider (such as Okta or Azure AD) governs all AI-to-data access.

• One unified logging and monitoring dashboard covers every tool call across the entire AI estate.

• Cache management — both at the Gateway level (tool discovery data) and the Server level (query results via Redis) — reduces redundant backend calls and improves response times.

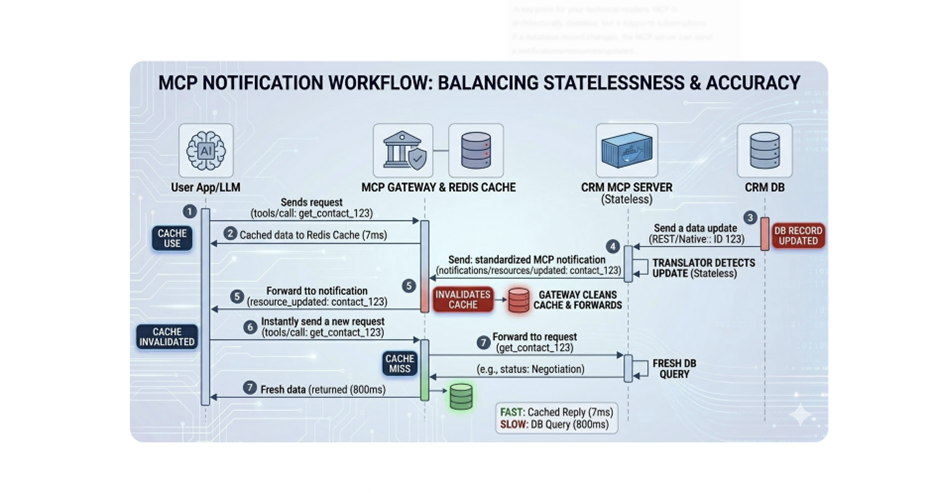

The State Paradox: Stateless Protocol, Stateful Architecture

A key architectural nuance: each MCP tool call is a self-contained JSON-RPC message that carries no inherent memory of earlier results, even though the client and server share a negotiated session. Production-ready implementations therefore layer caching on top of this core to achieve both performance and accuracy.

When underlying data changes, the MCP Server emits a notifications/resources/updated message to clients that have subscribed to that resource, signaling the client application to invalidate its cache and fetch fresh data. This mechanism lets the system benefit from cached speed without continuing to serve data that the server has reported as changed.
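A client-side cache built around this notification could be sketched as follows. The `ResourceCache` class, the `crm://` URI, and the `backend` dictionary are hypothetical; the notification shape (a `method` of `notifications/resources/updated` with the resource `uri` in `params`) follows the MCP specification.

```python
from typing import Any, Callable, Dict


class ResourceCache:
    """Client-side cache that drops an entry when the server reports a change."""

    def __init__(self, fetch: Callable[[str], Any]) -> None:
        self._fetch = fetch
        self._entries: Dict[str, Any] = {}
        self.fetch_count = 0  # number of backend reads, for illustration

    def read(self, uri: str) -> Any:
        if uri not in self._entries:
            self.fetch_count += 1
            self._entries[uri] = self._fetch(uri)
        return self._entries[uri]

    def on_notification(self, message: Dict[str, Any]) -> None:
        # Invalidate only the resource named in the notification.
        if message.get("method") == "notifications/resources/updated":
            self._entries.pop(message["params"]["uri"], None)


# Hypothetical backend whose data can change between reads.
backend = {"crm://deals/ACME": "negotiation"}
cache = ResourceCache(lambda uri: backend[uri])
```

Repeated calls to `cache.read("crm://deals/ACME")` hit the backend once. After the backend value changes and the server's notification is passed to `cache.on_notification(...)`, the next read fetches the fresh value.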

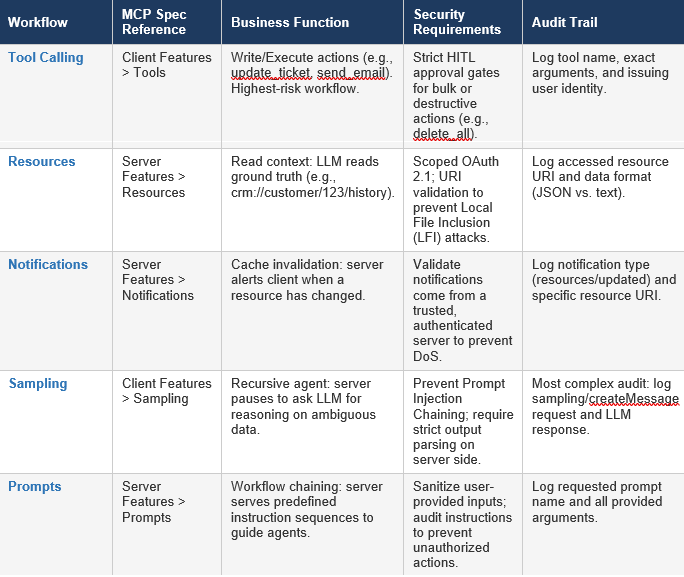

5. Governance Reference: MCP Core Workflows

The following table provides a structured overview of each core MCP workflow, its specification reference, primary business function, and the security and audit controls required for enterprise deployment.

Evaluated through the three lenses of Security, Cost, and Resources, the case for MCP adoption in enterprise AI environments is clear:

• Security: MCP replaces soft prompt-based instructions with hard infrastructure-level permissions, human-approval gates, and immutable audit trails.

• Cost: Dynamic Tool Discovery eliminates token bloat by serving only the context the model needs at the precise moment it needs it.

• Resources: Containerized, event-driven deployment and a unified Gateway provide scalable AI connectivity without collapsing under microservice sprawl.

As autonomous AI agents become central to enterprise operations, the question is no longer whether to govern them — it is how. MCP provides the answer: not as a technical preference, but as the foundational governance layer that keeps AI agents functioning as helpful, auditable, and controllable assistants rather than unguided liabilities.

The future of enterprise AI is not about giving models more power — it is about building the guardrails that make that power trustworthy.